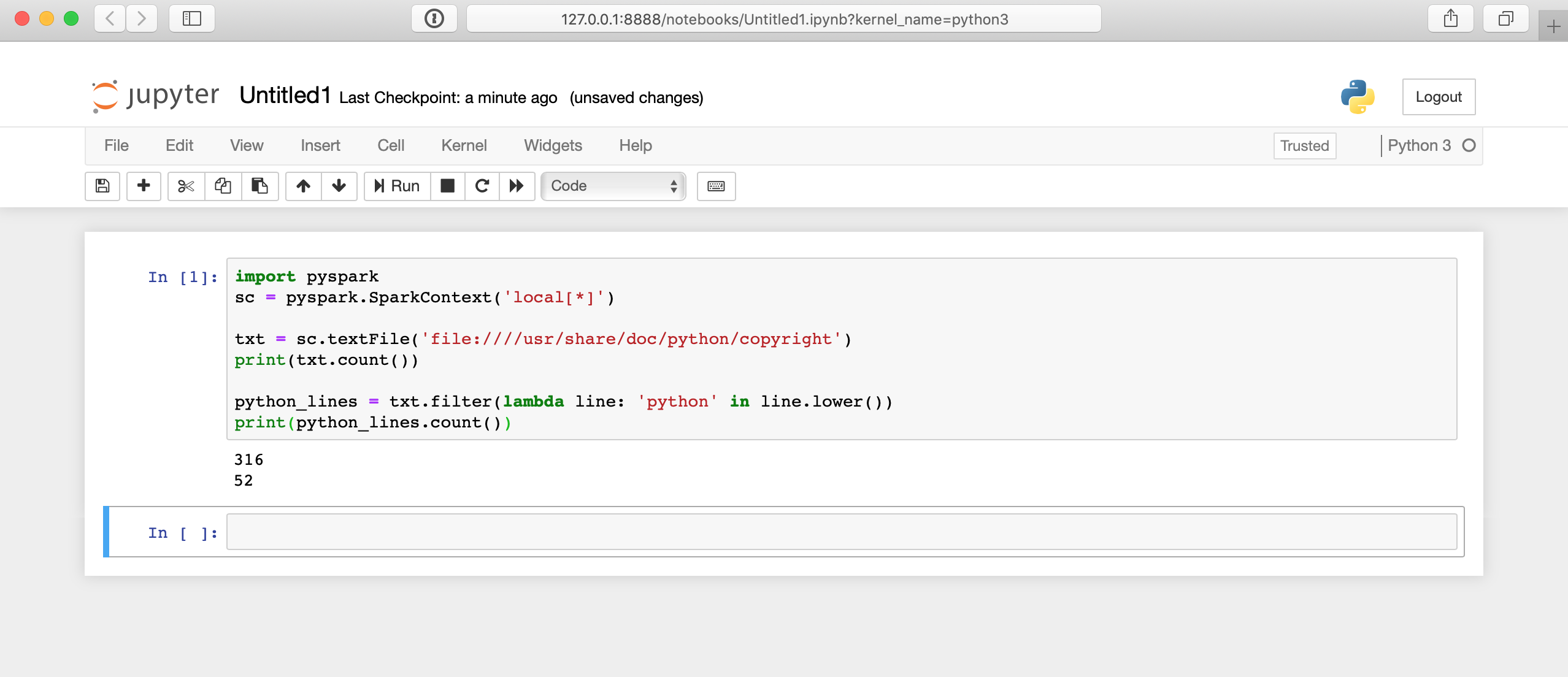

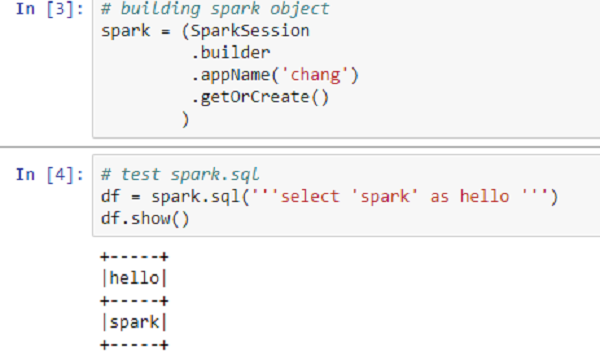

Apache Spark is one of the most popular and largest open source projects in data processing, with rich high-level APIs for programming languages such as Scala, Python, Java, and R. Internet powerhouses such as Netflix, Yahoo, and eBay have deployed Spark at massive scale, collectively processing multiple petabytes of data on clusters of over 8,000 nodes.

Typically, when you think of a computer, you think of one machine sitting on your desk at home or at work. Such a machine works perfectly well for applying machine learning to a small dataset. However, when you have a huge dataset (gigabytes or terabytes), a single computer is not powerful enough for some tasks.

Spark is written mostly in Scala, a functional programming language, and is commonly run on top of Hadoop/HDFS. However, for most beginners, Scala is not a great first language to learn when venturing into the world of data science. Fortunately, Spark provides a wonderful Python API called PySpark. It allows Python programmers to interface with the Spark framework, letting you manipulate data at scale and work with objects over a distributed file system. So Spark is not a new programming language that you have to learn, but a framework that typically works on top of HDFS. It does introduce new concepts such as nodes, lazy evaluation, and the transformation-action (or 'map and reduce') paradigm of programming. In fact, Spark is versatile enough to work with file systems other than Hadoop's, such as Amazon S3 or Databricks (DBFS).
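To get a feel for lazy evaluation and the transformation-action idea before installing anything, here is a small pure-Python analogy. It is not PySpark and it does not distribute any work: Python's built-in lazy `map` and `filter` stand in for transformations (PySpark's RDD API has methods with the same names), and `functools.reduce` plays the role of an action. The `square` helper and the `evaluated` list are illustrative names made up for this sketch.

```python
# Pure-Python analogy of the lazy transformation-action model.
# NOTE: this is NOT PySpark; lazy iterators stand in for a distributed dataset.
from functools import reduce

evaluated = []  # records which elements have actually been processed


def square(x):
    evaluated.append(x)  # side effect so we can observe when work happens
    return x * x


numbers = range(1, 6)

# "Transformations": these only describe the pipeline; nothing runs yet.
squared = map(square, numbers)
even_squares = filter(lambda v: v % 2 == 0, squared)

# Snapshot taken before any action: no element has been processed so far.
work_done_before_action = list(evaluated)

# "Action": reducing to a single value forces the whole pipeline to execute.
total = reduce(lambda a, b: a + b, even_squares)

print(work_done_before_action)  # []
print(total)                    # 20  (4 + 16)
print(evaluated)                # [1, 2, 3, 4, 5]
```

The point to take away is that building the pipeline costs nothing; the work only happens when a result is requested. Spark applies the same principle, which lets it plan the whole chain of transformations before touching the data.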